Well, let's take an example: the data science team needs temperature data for 10 locations from 10 different vendors. All the vendors would have different ways to provide you data. It could be an API, CSVs, an XML file, and many more.

There's a couple of examples here (as well as some people who shouldn't have commented lol), but here's an extremely practical example and extremely high-level overview of how we use ETL at my healthcare company.

Every hour we receive a huge pile of HL7v2 messages (healthcare standard) from our various partners. These get dumped (basically raw with some attached metadata) into some tables, because you gotta store them somewhere. They are extraordinarily difficult to use in these tables. We have a batch ETL pipeline that does the following:

1) EXTRACTs the new HL7v2 messages from these tables.

2) TRANSFORMs the data by parsing apart the message, identifying what type of message it is, mapping the HL7v2 fields to the appropriate FHIR fields, and running some consistency checks, fuzzy-matching & other logic to align our business' internal customer identifiers (e.g. if John Doe and John C. Doe have the same date of birth and same address, they're probably the same customer; if it's the same name and the same point-of-sale but the address changed, it's probably the same customer who moved, etc). This is done for customers, observations, encounters, diagnoses, etc. to ensure the data is as internally consistent as we can get it.

3) LOADs the data into our FHIR store with the appropriate mappings and identifiers.

At this point, we have another similar-ish ETL pipeline that takes the data out of the FHIR store and massages it into some flattened tables for ease of querying. This final landing is where the analyst/scientist/ML Ops can pick up the data for whatever jobs they have to do, be it dashboarding, exploratory modeling, or pipelining into production models.
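To make the TRANSFORM step of the HL7v2 pipeline a bit more concrete, here is a rough sketch in plain Python. It is not the pipeline described above. The sample messages, the `Person` class, the `cust-N` identifiers, and the exact-match rule in `match_key` are all made up for illustration, and the rule is a deliberately simple stand-in for real fuzzy matching. The only things taken from the standards are the field positions (MSH-9 message type, PID-5 name, PID-7 date of birth, PID-11 address) and the basic shape of a FHIR `Patient` resource.

```python
from dataclasses import dataclass

# Hypothetical sample messages: segments separated by "\r", fields by "|".
MSG_A = ("MSH|^~\\&|APP|FAC|RCV|RFAC|202401010000||ADT^A01|1|P|2.3\r"
         "PID|1||123||Doe^John||19800101|M|||1 Main St^^Springfield")
MSG_B = ("MSH|^~\\&|APP|FAC|RCV|RFAC|202401010100||ADT^A08|2|P|2.3\r"
         "PID|1||987||Doe^John^C||19800101|M|||1 MAIN ST^^SPRINGFIELD")
MSG_C = ("MSH|^~\\&|APP|FAC|RCV|RFAC|202401010200||ADT^A01|3|P|2.3\r"
         "PID|1||555||Roe^Jane||19900202|F|||2 Oak Ave^^Shelbyville")


@dataclass(frozen=True)
class Person:
    family: str
    given: str
    birth_date: str  # YYYYMMDD, as sent in PID-7
    address: str     # raw PID-11 value


def field(segment: list[str], index: int) -> str:
    """Return a field by position, or '' if the segment is too short."""
    return segment[index] if index < len(segment) else ""


def parse_hl7v2(raw: str) -> tuple[str, Person]:
    """Split a message into segments and pull out the few fields we need."""
    segments: dict[str, list[str]] = {}
    for line in raw.strip().replace("\n", "\r").split("\r"):
        if line:
            fields = line.split("|")
            segments.setdefault(fields[0], fields)  # keep first of each kind
    msh, pid = segments["MSH"], segments["PID"]
    # MSH-1 is the "|" separator itself, so MSH-9 sits at list index 8.
    message_type = field(msh, 8)
    name = field(pid, 5).split("^")  # PID-5: family^given^middle
    person = Person(
        family=name[0],
        given=name[1] if len(name) > 1 else "",
        birth_date=field(pid, 7),
        address=field(pid, 11),
    )
    return message_type, person


def match_key(p: Person) -> tuple[str, str, str, str]:
    """Simplified identity rule: surname, first initial, DOB, normalized address."""
    address = " ".join(p.address.replace("^", " ").lower().split())
    return (p.family.lower(), p.given[:1].lower(), p.birth_date, address)


def assign_ids(people: list[Person]) -> list[str]:
    """Give records with the same match key the same internal identifier."""
    known: dict[tuple[str, str, str, str], str] = {}
    return [known.setdefault(match_key(p), f"cust-{len(known) + 1}") for p in people]


def to_fhir_patient(p: Person, internal_id: str) -> dict:
    """Map the parsed fields onto a minimal FHIR Patient-shaped dict."""
    d = p.birth_date
    return {
        "resourceType": "Patient",
        "identifier": [{"value": internal_id}],
        "name": [{"family": p.family, "given": [p.given]}],
        "birthDate": f"{d[:4]}-{d[4:6]}-{d[6:8]}",
    }
```

Running `assign_ids` over the three parsed samples puts the two John Doe records under one identifier and Jane Roe under another. A real matcher would also have to handle the "same person, new address" case, which this exact-match key does not.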

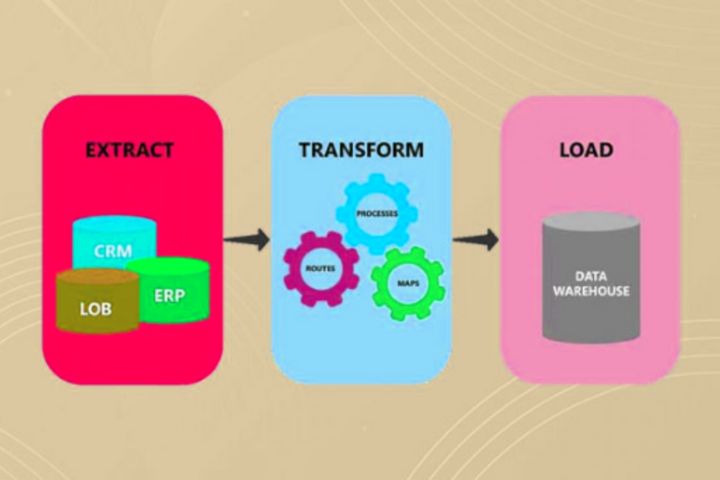

ETL (Extract, Transform, Load) is a design pattern for data architecture, with variations such as ELT or EtLT. There are vendors available, such as Fivetran or Portable, that have pre-built connections that they manage. Here are two examples of ELT/ETL in action.

Your organization is starting to have more questions about historical trends and patterns in your data. Currently, the org only has a transactional database (OLTP) for the product, and running these heavy analytical queries runs the risk of breaking the transactional database for the product. Thus, you decide to replicate the data on a database designed specifically for analytics (OLAP) to power these heavy queries while not risking the production transactional database. You build an ETL pipeline to replicate data from the transactional database to the analytical database, including some data transformations to make it easier to use.

Your organization purchases third-party data from a vendor to supplement your organization's data. While this third-party data is useful, the vendor provides extremely messy tables. Since this data is for R&D purposes, there is no defined business logic available. Therefore, you deem it's okay to dump this data into a data lake and give the data science team access to explore. You build an ELT pipeline that extracts the raw data from the third-party vendor and loads it into the data lake. After a few iterations, the data science team has determined which parts of the data are valuable, and builds data transformations on top of the data in the data lake for their workflows.
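The difference between the two patterns mostly comes down to where the transform happens. Here is a small sketch using Python's built-in `sqlite3` as a stand-in for both the analytical database and the data lake. The table names, the `RAW_ROWS` sample data, and the cleaning rules are all hypothetical, so treat it as an illustration of the ordering and not as a recommended setup.

```python
import sqlite3

# Hypothetical messy vendor rows: inconsistent casing, stray whitespace,
# and numbers delivered as strings.
RAW_ROWS = [(" Berlin ", "21.5"), ("berlin", "19.0"), ("OSLO", "7.25")]


def run_etl(conn: sqlite3.Connection, rows: list[tuple[str, str]]) -> None:
    """ETL: transform in application code, then load only the tidy result."""
    conn.execute("CREATE TABLE temps_clean (city TEXT, temp_c REAL)")
    cleaned = [(city.strip().lower(), float(temp)) for city, temp in rows]
    conn.executemany("INSERT INTO temps_clean VALUES (?, ?)", cleaned)


def run_elt(conn: sqlite3.Connection, rows: list[tuple[str, str]]) -> None:
    """ELT: load the raw rows untouched, then transform inside the store."""
    conn.execute("CREATE TABLE temps_raw (city TEXT, temp_c TEXT)")
    conn.executemany("INSERT INTO temps_raw VALUES (?, ?)", rows)
    # The transform lives next to the data and can be rewritten later
    # without re-extracting anything from the vendor.
    conn.execute(
        "CREATE VIEW temps_clean AS "
        "SELECT lower(trim(city)) AS city, CAST(temp_c AS REAL) AS temp_c "
        "FROM temps_raw"
    )


def avg_by_city(conn: sqlite3.Connection) -> list[tuple[str, float]]:
    """The same downstream query works against either pipeline's output."""
    return conn.execute(
        "SELECT city, avg(temp_c) FROM temps_clean GROUP BY city ORDER BY city"
    ).fetchall()


etl_db = sqlite3.connect(":memory:")
run_etl(etl_db, RAW_ROWS)

elt_db = sqlite3.connect(":memory:")
run_elt(elt_db, RAW_ROWS)
```

Both databases answer `avg_by_city` the same way, but only the ELT one still holds the raw rows. That is what lets the data science team keep iterating on the transformations once they figure out which parts of the data are valuable.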
